OpenAI Codex pricing: API costs, container billing, and how it stacks up against Claude Code

OpenAI's Codex is not the code-completion API from 2021. It's a multi-agent coding assistant that runs in cloud containers. The billing model adds container fees on top of token costs, which is worth understanding before you scale up API usage.


- gpt-5.2-codex costs $1.75/$14.00 per million tokens -- input is about 40% cheaper than Sonnet 4.6, output is nearly the same

- Container fees add $0.03 to $1.92 per task on top of token costs, scaling with container size (1 GB to 64 GB), which in turn tracks repo size

- Plus subscription ($20/mo) covers most individual workloads; API billing only makes sense at scale or for pipelines

What "Codex" means in 2026

OpenAI retired the Codex name in 2023 when it deprecated the original code-completion API. It came back in May 2025 as something different: a cloud-hosted multi-agent coding assistant built on GPT-5.x, with a dedicated fine-tuned model called gpt-5.2-codex.

The difference from calling GPT-5.4 directly is the execution environment. Codex runs in isolated containers with full repo access, a shell, a test runner, and file I/O. You hand it a task and it works autonomously -- reads your codebase, runs tests to verify fixes, commits changes. GPT-5.4 via API is tokens in, tokens out. You supply the scaffolding yourself.

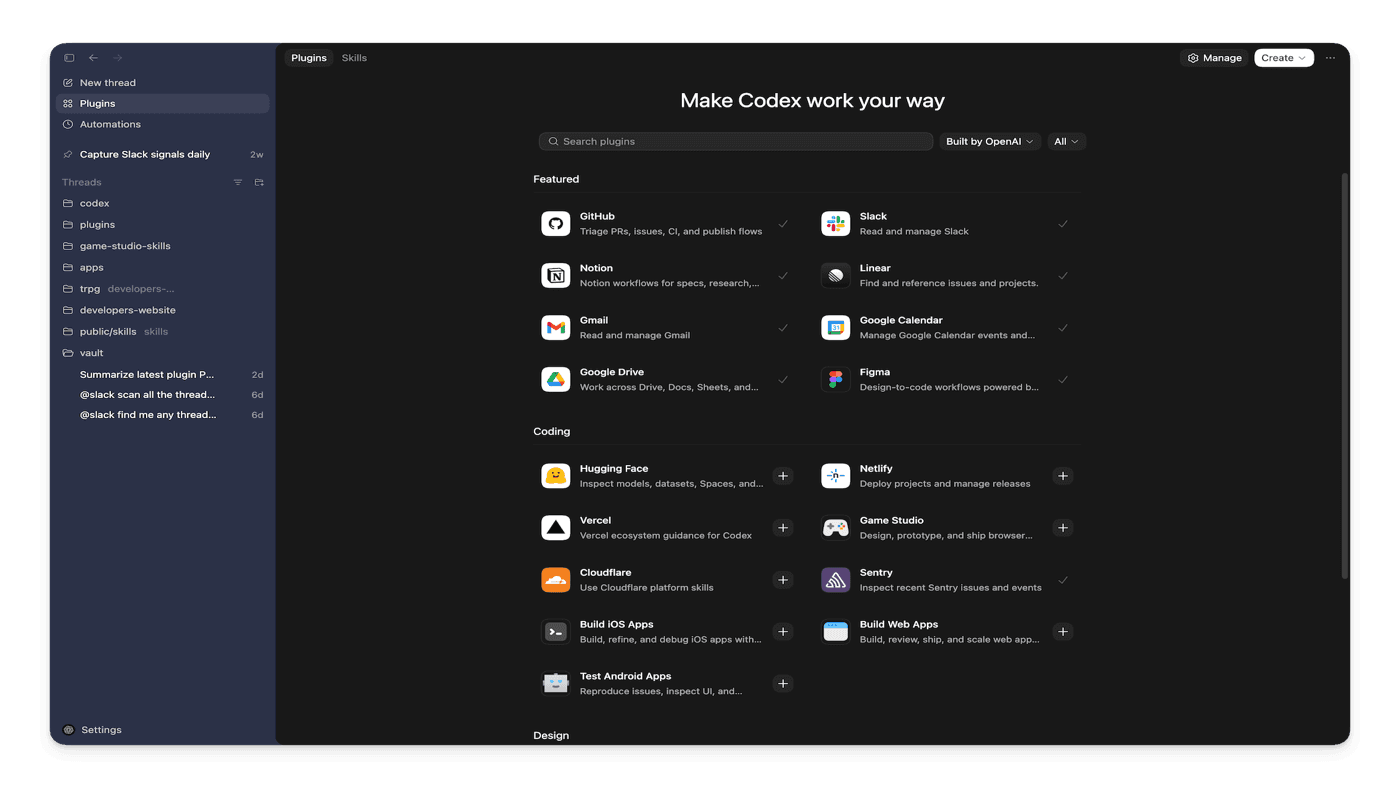

A March 27 update added a plugins marketplace with Slack, Figma, Notion, Gmail, and Google Drive integrations, plus an overhauled multi-agent coordination system in which agents reference each other by path-based addresses instead of raw UUIDs. OpenAI disclosed 1.6 million weekly active users as of early March 2026.

API pricing

If you use Codex programmatically, you pay for tokens plus container fees. The coding-specific model is gpt-5.2-codex.

| Model | Input / 1M tokens | Cached input / 1M tokens | Output / 1M tokens | Priority, input / output (2x) |
|---|---|---|---|---|
| gpt-5.2-codex | $1.75 | $0.175 | $14.00 | $3.50 / $28.00 |
| gpt-5.4 | $2.50 | $0.25 | $15.00 | $5.00 / $30.00 |
| gpt-5.4-mini | $0.75 | $0.075 | $4.50 | $1.50 / $9.00 |

Batch processing (async, 24h turnaround) is 50% off all models. Source: OpenAI API pricing
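As a rough illustration of how these rates combine, here is a minimal Python sketch of a token-cost estimate. The function name `token_cost` and its parameters are hypothetical, not part of any OpenAI SDK. It also assumes that the 2x priority multiplier and the 50% batch discount apply to the whole token bill (cached input included) and that the two options are mutually exclusive; the pricing table does not spell out either point.

```python
# Prices in USD per 1M tokens, copied from the pricing table in this article.
PRICES = {
    "gpt-5.2-codex": {"input": 1.75, "cached": 0.175, "output": 14.00},
    "gpt-5.4": {"input": 2.50, "cached": 0.25, "output": 15.00},
    "gpt-5.4-mini": {"input": 0.75, "cached": 0.075, "output": 4.50},
}


def token_cost(model, input_tokens, output_tokens, cached_tokens=0,
               priority=False, batch=False):
    """Estimate the token cost of one request in USD.

    cached_tokens is the portion of input_tokens billed at the cached rate.
    """
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")
    if priority and batch:
        # Assumption: priority and batch are treated as mutually exclusive.
        raise ValueError("choose either priority or batch, not both")

    p = PRICES[model]
    fresh_tokens = input_tokens - cached_tokens
    cost = (
        fresh_tokens * p["input"]
        + cached_tokens * p["cached"]
        + output_tokens * p["output"]
    ) / 1_000_000

    if priority:
        cost *= 2.0  # assumption: 2x applies to every token class
    if batch:
        cost *= 0.5  # assumption: 50% off applies to every token class
    return cost


if __name__ == "__main__":
    base = token_cost("gpt-5.2-codex", 50_000, 5_000)
    cached = token_cost("gpt-5.2-codex", 50_000, 5_000, cached_tokens=40_000)
    print(f"no cache: ${base:.4f}, 40K cached: ${cached:.4f}")
```

Container fees are not included here; they are billed separately.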

Container billing

Codex containers are billed separately from token usage. According to OpenAI's pricing documentation, the current billing scheme charges a flat fee per container created. A 4 GB container runs $0.12 per task and a 64 GB container runs $1.92. For most tasks the container fee is small relative to token costs, but for long-running agents on large repos it adds up.

| Container size | Cost per container | Typical use |
|---|---|---|
| 1 GB | $0.03 | Small scripts, single files |
| 4 GB | $0.12 | Typical medium repos |
| 16 GB | $0.48 | Large monorepos |
| 64 GB | $1.92 | Very large codebases |

Source: OpenAI pricing docs, Tools section.
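The listed fees work out to $0.03 per GB at every tier. A small lookup sketch in Python makes the tiers easy to budget against. The helper names `container_fee` and `smallest_tier` are hypothetical, and the sketch assumes the flat per-container fee described above, with no time-based component.

```python
# Flat per-container fees in USD, keyed by container size in GB
# (values copied from the container table in this article).
CONTAINER_FEES = {1: 0.03, 4: 0.12, 16: 0.48, 64: 1.92}


def container_fee(size_gb, containers=1):
    """Total fee in USD for a number of containers of one listed size."""
    if size_gb not in CONTAINER_FEES:
        raise ValueError(f"no listed tier for {size_gb} GB")
    if containers < 0:
        raise ValueError("containers must be non-negative")
    return CONTAINER_FEES[size_gb] * containers


def smallest_tier(required_gb):
    """Smallest listed tier (in GB) that fits the required memory."""
    for size in sorted(CONTAINER_FEES):
        if size >= required_gb:
            return size
    raise ValueError(f"{required_gb} GB exceeds the largest listed tier")


if __name__ == "__main__":
    tier = smallest_tier(10)  # a workload needing about 10 GB
    print(f"{tier} GB tier, ${container_fee(tier):.2f} per container")
```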

Subscription plans

Most developers use Codex through a ChatGPT subscription rather than raw API. Limits are tasks per 5-hour rolling window, not daily caps.

| Plan | Price | Cloud tasks / 5h | Local tasks / 5h |
|---|---|---|---|
| Plus | $20/mo | 10-60 | 45-225 |
| Pro | $200/mo | 50-400 | 300-1,500 |
| Business | $30/user/mo | 10-60 | 45-225 |

Task limits vary by complexity. Pro users running many large cloud tasks per day may hit the rolling window limit and need to top up with API credits.

Codex vs Claude Code: cost comparison

We ran a full breakdown of Claude Code costs earlier today. The short version: Claude Code uses Sonnet 4.6 ($3.00/$15.00 per million tokens) or Opus 4.6 ($5.00/$25.00), with no session fee or container charge. You pay for tokens only.

Codex charges tokens plus containers. gpt-5.2-codex at $1.75 input is cheaper than Sonnet 4.6 at $3.00, and output ($14.00 vs $15.00) is close. The container overhead is the main variable to account for.

| Task type | Codex API (tokens + container) | Claude Code (Sonnet 4.6) | Notes |
|---|---|---|---|
| Small bug fix (50K ctx, 5K out) | $0.16 + $0.03 container | $0.23 | Codex ~$0.04 cheaper |
| Medium feature (200K ctx, 20K out) | $0.63 + $0.12 container | $0.90 | Codex ~$0.15 cheaper |
| Large refactor (500K ctx, 50K out, 40 min) | $1.58 + $0.24 container | $2.25 | Codex ~$0.43 cheaper |
| Heavy session (1M ctx, 100K out, 2h) | $3.15 + $1.44 container | $4.50 | 16 GB container; Codex ~$0.09 more expensive |

On a 2-hour session with a 16 GB container, Codex API comes out at $4.59 vs Claude Code at $4.50 -- effectively the same once container fees are included. If context grows across turns, the Codex input savings erode further. For short, contained tasks, Codex API is meaningfully cheaper. For marathon sessions, the gap closes.
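The arithmetic behind the comparison can be reproduced with a short Python sketch. Token prices and container fees are taken from this article as given; the container fee per scenario is passed in as a plain number rather than derived, and the names `token_cost`, `SCENARIOS`, and `compare` are illustrative only.

```python
# USD per 1M tokens, from this article.
CODEX = {"input": 1.75, "output": 14.00}   # gpt-5.2-codex
SONNET = {"input": 3.00, "output": 15.00}  # Claude Sonnet 4.6


def token_cost(prices, input_tokens, output_tokens):
    """Token cost in USD for one task at the given per-1M prices."""
    return (
        input_tokens * prices["input"] + output_tokens * prices["output"]
    ) / 1_000_000


# (name, context tokens, output tokens, Codex container fee in USD)
SCENARIOS = [
    ("Small bug fix", 50_000, 5_000, 0.03),
    ("Medium feature", 200_000, 20_000, 0.12),
    ("Large refactor", 500_000, 50_000, 0.24),
    ("Heavy session", 1_000_000, 100_000, 1.44),
]


def compare(scenarios=SCENARIOS):
    """Return (name, codex_total, claude_total, claude_minus_codex) rows."""
    rows = []
    for name, ctx, out, container in scenarios:
        codex = token_cost(CODEX, ctx, out) + container
        claude = token_cost(SONNET, ctx, out)  # no container charge
        rows.append((name, codex, claude, claude - codex))
    return rows


if __name__ == "__main__":
    for name, codex, claude, diff in compare():
        # A positive difference means Codex is cheaper for that scenario.
        print(f"{name:15s} Codex ${codex:.3f}  Claude ${claude:.3f}  "
              f"difference ${diff:+.3f}")
```

A negative difference on the last row reflects the point made above: once the container fee is added, the heavy session costs slightly more on Codex than on Claude Code.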

Coding performance

On SWE-bench Pro -- the harder variant that tests against real GitHub issues -- gpt-5.2-codex scores 56.8% and GPT-5.4 scores 57.7%. On Terminal-Bench 2.0, which tests fully autonomous terminal tasks rather than single-file diffs, gpt-5.2-codex reportedly beats Claude Opus 4.6 by 12 percentage points.

Benchmarks smooth over variance. Whether gpt-5.2-codex outperforms Sonnet 4.6 on your specific codebase is something to test. The container environment does real work -- repo access, test execution, iterative fixing -- and that is genuinely different from prompt-and-response coding tools.

Which one to use

For individual developers, the $20/mo Plus subscription is the sensible starting point for Codex. You get 10-60 cloud tasks per 5-hour window, which covers a normal day of autonomous coding tasks. Only move to API billing if you are automating at scale or building multi-agent pipelines.
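One rough way to judge when a flat subscription pays off is to compute how many API-billed tasks per month would cost as much as the subscription. The Python sketch below does that; the function name `breakeven_tasks` is hypothetical, and the per-task API costs fed into it are the illustrative totals from the comparison table, not measured averages.

```python
import math


def breakeven_tasks(subscription_usd, api_cost_per_task_usd):
    """Smallest monthly task count at which API spend reaches the subscription price."""
    if api_cost_per_task_usd <= 0:
        raise ValueError("api_cost_per_task_usd must be positive")
    return math.ceil(subscription_usd / api_cost_per_task_usd)


if __name__ == "__main__":
    # Per-task costs (tokens + container) taken from the comparison table.
    for label, cost in [("small bug fix", 0.19), ("medium feature", 0.75)]:
        n = breakeven_tasks(20, cost)
        print(f"Plus ($20/mo) matches API spend at {n} {label} tasks per month")
```

This ignores the rolling task limits on subscriptions, so it is a lower bound on usefulness rather than a full comparison.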

If your team already pays for ChatGPT Pro ($200/mo), Codex is included. The incremental cost is zero unless you hit task limits. That changes the comparison entirely versus stacking raw API rates.

Claude Code is billed per token via the API, or included in Max plan subscriptions. The billing is simpler to reason about for budget planning: no container fees, no session boundaries. Prompt caching cuts real session costs significantly on large codebases; Codex supports it too, billing cache hits at 10% of the input price.

Set a billing alert if you run long agentic tasks via the Codex API. Container fees compound on large repos and can be easy to miss if you are used to pure token billing.
